
Create Nutanix cluster

- 1: Overview

- 2: Requirements for EKS Anywhere on Nutanix Cloud Infrastructure

- 3: Preparing Nutanix Cloud Infrastructure for EKS Anywhere

- 4: Create Nutanix cluster

- 5: Configure for Nutanix

1 - Overview

Creating a Nutanix cluster

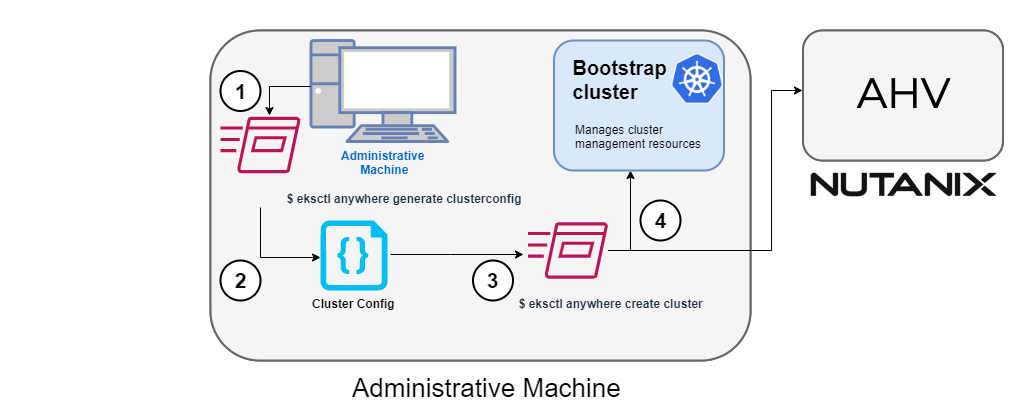

The following diagram illustrates the cluster creation process for the Nutanix provider.

Start creating a Nutanix cluster

1. Generate a config file for Nutanix

Identify the provider (--provider nutanix) and the cluster name in the eksctl anywhere generate clusterconfig command and direct the output to a cluster config .yaml file.

2. Modify the config file

Modify the generated cluster config file to suit your situation. Details about this config file can be found on the Nutanix Config page.

3. Launch the cluster creation

After modifying the cluster configuration file, run the eksctl anywhere create cluster command, providing the cluster config.

The verbosity can be increased to see more details on the cluster creation process (-v=9 provides maximum verbosity).

4. Create bootstrap cluster

The cluster creation process starts with creating a temporary Kubernetes bootstrap cluster on the Administrative machine.

First, the cluster creation process runs a series of commands to validate the Nutanix environment:

- Checks that the Nutanix environment is available.

- Authenticates the Nutanix provider to the Nutanix environment using the supplied Prism Central endpoint information and credentials.

For each of the NutanixMachineConfig objects, the following validations are performed:

- Validates the provided resource configuration (CPU, memory, storage)

- Validates the Nutanix subnet

- Validates the Nutanix Prism Element cluster

- Validates the image

- (Optional) Validates the Nutanix project

If all validations pass, you will see this message:

✅ Nutanix Provider setup is valid

During bootstrap cluster creation, the following messages will be shown:

Creating new bootstrap cluster

Provider specific pre-capi-install-setup on bootstrap cluster

Installing cluster-api providers on bootstrap cluster

Provider specific post-setup

Next, the Nutanix provider will create the machines in the Nutanix environment.

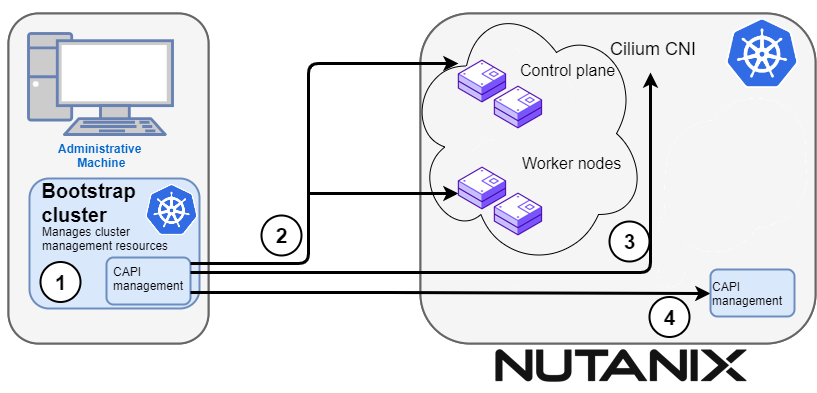

Continuing cluster creation

The following diagram illustrates the activities that occur next:

1. CAPI management

Cluster API (CAPI) management will orchestrate the creation of the target cluster in the Nutanix environment.

Creating new workload cluster

2. Create the target cluster nodes

The control plane and worker nodes will be created and configured using the Nutanix provider.

3. Add Cilium networking

Add Cilium as the CNI plugin to use for networking between the cluster services and pods.

Installing networking on workload cluster

4. Moving cluster management to target cluster

CAPI components are installed on the target cluster. Next, cluster management is moved from the bootstrap cluster to the target cluster.

Creating EKS-A namespace

Installing cluster-api providers on workload cluster

Installing EKS-A secrets on workload cluster

Installing resources on management cluster

Moving cluster management from bootstrap to workload cluster

Installing EKS-A custom components (CRD and controller) on workload cluster

Installing EKS-D components on workload cluster

Creating EKS-A CRDs instances on workload cluster

5. Saving cluster configuration file

The cluster configuration file is saved.

Writing cluster config file

6. Delete bootstrap cluster

The bootstrap cluster is no longer needed and is deleted when the target cluster is up and running.

The target cluster can now be used as either:

- A standalone cluster (to run workloads) or

- A management cluster (to optionally create one or more workload clusters)

Creating workload clusters (optional)

The target cluster acts as a management cluster. One or more workload clusters can be managed by this management cluster as described in Create separate workload clusters:

- Use eksctl to generate a cluster config file for the new workload cluster.

- Modify the cluster config with a new cluster name and different Nutanix resources.

- Use eksctl to create the new workload cluster from the new cluster config file.

2 - Requirements for EKS Anywhere on Nutanix Cloud Infrastructure

To run EKS Anywhere, you will need:

Prepare Administrative machine

Set up an Administrative machine as described in Install EKS Anywhere.

Prepare a Nutanix environment

To prepare a Nutanix environment to run EKS Anywhere, you need the following:

- A Nutanix environment running AOS 5.20.4+ with AHV and Prism Central 2022.1+

- Capacity to deploy 6-10 VMs

- DHCP service or Nutanix IPAM running in your environment in the primary VM network for your workload cluster

- A VM image imported into the Prism Image Service for the workload VMs

- User credentials to create VMs and attach networks, etc.

- One IP address routable from the cluster but excluded from the DHCP/IPAM offering. This IP address is to be used as the Control Plane Endpoint IP.

Below are some suggestions to ensure that this IP address is never handed out by your DHCP server.

You may need to contact your network engineer.

- Pick an IP address reachable from the cluster subnet that is excluded from the DHCP range OR

- Alter DHCP ranges to leave out one or more IP addresses at the top and/or the bottom of the range OR

- Create an IP reservation for this IP on your DHCP server. This is usually accomplished by adding a dummy mapping of this IP address to a non-existent MAC address.

- Block an IP address from the Nutanix IPAM managed network using aCLI
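Before settling on a Control Plane Endpoint IP, it can help to confirm that the candidate address really sits outside the DHCP pool. The following is a minimal POSIX shell sketch of that check; the function names and the example addresses are hypothetical and are not part of EKS Anywhere or any Nutanix tooling.

```shell
# Convert a dotted-quad IPv4 address to an integer so ranges can be compared.
ip_to_int() {
  old_ifs=$IFS
  IFS=.
  set -- $1            # split the address into its four octets
  IFS=$old_ifs
  echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
}

# Succeeds (exit status 0) when IP lies outside the inclusive range START..END.
ip_outside_range() {
  ip=$(ip_to_int "$1")
  start=$(ip_to_int "$2")
  end=$(ip_to_int "$3")
  [ "$ip" -lt "$start" ] || [ "$ip" -gt "$end" ]
}

# Hypothetical values: replace with your own endpoint IP and DHCP pool.
if ip_outside_range 10.0.0.250 10.0.0.50 10.0.0.200; then
  echo "endpoint IP is outside the DHCP range"
else
  echo "endpoint IP collides with the DHCP range"
fi
```

This only compares against a single contiguous pool; it does not detect static assignments or reservations made elsewhere on the network.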

Each VM will require:

- 2 vCPUs

- 4GB RAM

- 40GB Disk
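To size the environment, the per-VM figures above can be multiplied by the number of VMs you plan to deploy. The sketch below is a simple illustration in POSIX shell; the function name eksa_capacity is hypothetical, and the totals cover only the VMs you count (they do not include any headroom you may want for upgrades).

```shell
# Per-VM minimums taken from the list above.
VCPUS_PER_VM=2
RAM_GB_PER_VM=4
DISK_GB_PER_VM=40

# Print total vCPUs, RAM (GB) and disk (GB) for a given number of
# control plane, etcd and worker VMs (arguments 1, 2 and 3).
eksa_capacity() {
  vms=$(( $1 + $2 + $3 ))
  echo "vms=$vms vcpus=$(( vms * VCPUS_PER_VM )) ram_gb=$(( vms * RAM_GB_PER_VM )) disk_gb=$(( vms * DISK_GB_PER_VM ))"
}

# Example: 3 control plane, 3 etcd and 3 worker VMs.
eksa_capacity 3 3 3
# prints: vms=9 vcpus=18 ram_gb=36 disk_gb=360
```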

The administrative machine and the target workload environment will need network access (TCP/443) to:

- Prism Central endpoint (must be accessible to EKS Anywhere clusters)

- Prism Element Data Services IP and CVM endpoints (for CSI storage connections)

- public.ecr.aws (for pulling EKS Anywhere container images)

- anywhere-assets.eks.amazonaws.com (to download the EKS Anywhere binaries and manifests)

- distro.eks.amazonaws.com (to download EKS Distro binaries and manifests)

- d2glxqk2uabbnd.cloudfront.net (for EKS Anywhere and EKS Distro ECR container images)

- api.ecr.us-west-2.amazonaws.com (for EKS Anywhere package authentication matching your region)

- d5l0dvt14r5h8.cloudfront.net (for EKS Anywhere package ECR container images)

- api.github.com (only if GitOps is enabled)
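One way to verify this access from the administrative machine is to attempt an HTTPS connection to each public endpoint. The sketch below assumes curl is installed; the function names are hypothetical, and the Prism Central and Prism Element addresses are site-specific, so they are left for you to add. A failure can also be caused by proxy or TLS settings rather than a blocked port.

```shell
# Public endpoints from the list above, one per line.
EKSA_ENDPOINTS="public.ecr.aws
anywhere-assets.eks.amazonaws.com
distro.eks.amazonaws.com
d2glxqk2uabbnd.cloudfront.net
api.ecr.us-west-2.amazonaws.com
d5l0dvt14r5h8.cloudfront.net
api.github.com"

# Attempt an HTTPS request to HOST; any HTTP response counts as reachable.
check_endpoint() {
  if curl --silent --output /dev/null --connect-timeout 5 "https://$1"; then
    echo "ok    $1"
  else
    echo "FAIL  $1"
  fi
}

# Check every endpoint in EKSA_ENDPOINTS in turn.
check_all_endpoints() {
  for host in $EKSA_ENDPOINTS; do
    check_endpoint "$host"
  done
}
```

Run check_all_endpoints after sourcing the snippet. If your region is not us-west-2, adjust the api.ecr entry accordingly.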

Nutanix information needed before creating the cluster

You need to get the following information before creating the cluster:

-

Static IP Addresses: You will need one IP address for the management cluster control plane endpoint, and a separate one for the controlplane of each workload cluster you add.

Let’s say you are going to have the management cluster and two workload clusters. For those, you would need three IP addresses, one for each. All of those addresses will be configured the same way in the configuration file you will generate for each cluster.

A static IP address will be used for control plane API server HA in each of your EKS Anywhere clusters. Choose IP addresses in your network range that do not conflict with other VMs and make sure they are excluded from your DHCP offering.

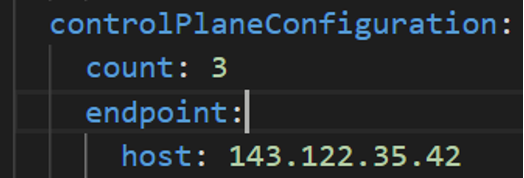

An IP address will be the value of the property

controlPlaneConfiguration.endpoint.hostin the config file of the management cluster. A separate IP address must be assigned for each workload cluster.

-

Prism Central FQDN or IP Address: The Prism Central fully qualified domain name or IP address.

-

Prism Element Cluster Name: The AOS cluster to deploy the EKS Anywhere cluster on.

-

VM Subnet Name: The VM network to deploy your EKS Anywhere cluster on.

-

Machine Template Image Name: The VM image to use for your EKS Anywhere cluster.

-

additionalTrustBundle (required if using a self-signed PC SSL certificate): The PEM encoded CA trust bundle of the root CA that issued the certificate for Prism Central.

3 - Preparing Nutanix Cloud Infrastructure for EKS Anywhere

Certain resources must be in place with appropriate user permissions to create an EKS Anywhere cluster using the Nutanix provider.

Configuring Nutanix User

You need a Prism Admin user to create EKS Anywhere clusters on top of your Nutanix cluster.

Build Nutanix AHV node images

Follow the steps outlined in artifacts to create an Ubuntu-based image for Nutanix AHV and import it into the AOS Image Service.

4 - Create Nutanix cluster

EKS Anywhere supports a Nutanix Cloud Infrastructure (NCI) provider for EKS Anywhere deployments. This document walks you through setting up EKS Anywhere on Nutanix Cloud Infrastructure with AHV in a way that:

- Deploys an initial cluster in your Nutanix environment. That cluster can be used as a self-managed cluster (to run workloads) or a management cluster (to create and manage other clusters)

- Deploys zero or more workload clusters from the management cluster

If your initial cluster is a management cluster, it is intended to stay in place so you can use it later to modify, upgrade, and delete workload clusters. Using a management cluster makes it faster to provision and delete workload clusters. It also lets you keep NCI credentials for a set of clusters in one place: on the management cluster. The alternative is to simply use your initial cluster to run workloads. See Cluster topologies for details.

Important

Creating an EKS Anywhere management cluster is the recommended model. Separating management features into a separate, persistent management cluster provides a cleaner model for managing the lifecycle of workload clusters (to create, upgrade, and delete clusters), while workload clusters run user applications. This approach also reduces provider permissions for workload clusters.

Note: Before you create your cluster, you have the option of validating the EKS Anywhere bundle manifest container images by following instructions in the Verify Cluster Images page.

Prerequisite Checklist

EKS Anywhere needs to:

- Be run on an Admin machine that has certain machine requirements.

- Have certain resources from your Nutanix deployment available.

- Have some preparation done before creating an EKS Anywhere cluster.

Also, see the Ports and protocols page for information on ports that need to be accessible from control plane, worker, and Admin machines.

Steps

The following steps are divided into two sections:

- Create an initial cluster (used as a management or self-managed cluster)

- Create zero or more workload clusters from the management cluster

Create an initial cluster

Follow these steps to create an EKS Anywhere cluster that can be used either as a management cluster or as a self-managed cluster (for running workloads itself).

- Optional Configuration

  Set License Environment Variable

  Add a license to any cluster for which you want to receive paid support. If you are creating a licensed cluster, set and export the license variable (see License cluster if you are licensing an existing cluster):

      export EKSA_LICENSE='my-license-here'

  After you have created your eksa-mgmt-cluster.yaml and set your credential environment variables, you will be ready to create the cluster.

  Configure Curated Packages

  The Amazon EKS Anywhere Curated Packages are only available to customers with the Amazon EKS Anywhere Enterprise Subscription. To request a free trial, talk to your Amazon representative or connect with one here. Cluster creation will succeed if authentication is not set up, but some warnings may be generated. Detailed package configurations can be found here.

  If you are going to use packages, set up authentication. These credentials should have limited capabilities:

      export EKSA_AWS_ACCESS_KEY_ID="your_access_id"
      export EKSA_AWS_SECRET_ACCESS_KEY="your_secret_key"
      export EKSA_AWS_REGION="us-west-2"

- Generate an initial cluster config (named mgmt for this example):

      CLUSTER_NAME=mgmt
      eksctl anywhere generate clusterconfig $CLUSTER_NAME \
         --provider nutanix > eksa-mgmt-cluster.yaml

- Modify the initial cluster config (eksa-mgmt-cluster.yaml) as follows:

  - Refer to Nutanix configuration for information on configuring this cluster config for a Nutanix provider.

  - Add Optional configuration settings as needed.

  - Create at least three control plane nodes, three worker nodes, and three etcd nodes, to provide high availability and rolling upgrades.

- Set Credential Environment Variables

  Before you create the initial cluster, you will need to set and export these environment variables for your Nutanix Prism Central user name and password. Make sure you use single quotes around the values so that your shell does not interpret the values:

      export EKSA_NUTANIX_USERNAME='billy'
      export EKSA_NUTANIX_PASSWORD='t0p$ecret'

  Note

  If you have a username in the form of domain_name/user_name, you must specify it as user_name@domain_name to avoid errors in cluster creation. For example, nutanix.local/admin should be specified as admin@nutanix.local.

- Create cluster

  For a regular cluster create (with internet access), type the following:

      eksctl anywhere create cluster \
         -f eksa-mgmt-cluster.yaml \
         # --install-packages packages.yaml \ # uncomment to install curated packages at cluster creation

  For an airgapped cluster create, follow Preparation for airgapped deployments instructions, then type the following:

      eksctl anywhere create cluster \
         -f eksa-mgmt-cluster.yaml \
         --bundles-override ./eks-anywhere-downloads/bundle-release.yaml \
         # --install-packages packages.yaml \ # uncomment to install curated packages at cluster creation

- Once the cluster is created, you can access it with the generated KUBECONFIG file in your local directory:

      export KUBECONFIG=${PWD}/${CLUSTER_NAME}/${CLUSTER_NAME}-eks-a-cluster.kubeconfig

- Check the cluster nodes:

  To check that the cluster is ready, list the machines to see the control plane and worker nodes:

      kubectl get machines -n eksa-system

  Example command output

      NAME              CLUSTER   NODENAME                                 PROVIDERID       PHASE     AGE   VERSION
      mgmt-4gtt2        mgmt      mgmt-control-plane-1670343878900-2m4ln   nutanix://xxxx   Running   11m   v1.24.7-eks-1-24-4
      mgmt-d42xn        mgmt      mgmt-control-plane-1670343878900-jbfxt   nutanix://xxxx   Running   11m   v1.24.7-eks-1-24-4
      mgmt-md-0-9868m   mgmt      mgmt-md-0-1670343878901-lkmxw            nutanix://xxxx   Running   11m   v1.24.7-eks-1-24-4
      mgmt-md-0-njpk2   mgmt      mgmt-md-0-1670343878901-9clbz            nutanix://xxxx   Running   11m   v1.24.7-eks-1-24-4
      mgmt-md-0-p4gp2   mgmt      mgmt-md-0-1670343878901-mbktx            nutanix://xxxx   Running   11m   v1.24.7-eks-1-24-4
      mgmt-zkwrr        mgmt      mgmt-control-plane-1670343878900-jrdkk   nutanix://xxxx   Running   11m   v1.24.7-eks-1-24-4

- Check the initial cluster’s CRD:

  To ensure you are looking at the initial cluster, list the cluster CRD to see that the name of its management cluster is itself:

      kubectl get clusters mgmt -o yaml

  Example command output

      ...
      kubernetesVersion: "1.28"
      managementCluster:
        name: mgmt
      workerNodeGroupConfigurations:
      ...

  Note

  The initial cluster is now ready to deploy workload clusters. However, if you just want to use it to run workloads, you can deploy pod workloads directly on the initial cluster without deploying a separate workload cluster and skip the section on running separate workload clusters. To make sure the cluster is ready to run workloads, run the test application in the Deploy test workload section.
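The username note in the credentials step above describes a mechanical rewrite from domain_name/user_name to user_name@domain_name. A minimal POSIX shell sketch of that rewrite follows; the helper name normalize_nutanix_username is hypothetical and is not provided by eksctl.

```shell
# Rewrite domain_name/user_name as user_name@domain_name.
# Usernames without a slash are printed unchanged.
normalize_nutanix_username() {
  case $1 in
    */*) echo "${1#*/}@${1%%/*}" ;;   # text after the first slash, then text before it
    *)   echo "$1" ;;
  esac
}

normalize_nutanix_username 'nutanix.local/admin'
# prints: admin@nutanix.local
```

For example, the result can be exported directly: export EKSA_NUTANIX_USERNAME="$(normalize_nutanix_username 'nutanix.local/admin')".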

Create separate workload clusters

Follow these steps if you want to use your initial cluster to create and manage separate workload clusters.

- Set License Environment Variable (Optional)

  Add a license to any cluster for which you want to receive paid support. If you are creating a licensed cluster, set and export the license variable (see License cluster if you are licensing an existing cluster):

      export EKSA_LICENSE='my-license-here'

- Generate a workload cluster config:

      CLUSTER_NAME=w01
      eksctl anywhere generate clusterconfig $CLUSTER_NAME \
         --provider nutanix > eksa-w01-cluster.yaml

  Refer to the initial config described earlier for the required and optional settings. Ensure workload cluster object names (Cluster, NutanixDatacenterConfig, NutanixMachineConfig, etc.) are distinct from management cluster object names.

- Be sure to set the managementCluster field to identify the name of the management cluster.

  For example, the management cluster, mgmt, is defined for our workload cluster w01 as follows:

      apiVersion: anywhere.eks.amazonaws.com/v1alpha1
      kind: Cluster
      metadata:
        name: w01
      spec:
        managementCluster:
          name: mgmt

- Create a workload cluster

  To create a new workload cluster from your management cluster, run this command, identifying:

  - The workload cluster YAML file

  - The initial cluster’s kubeconfig (this causes the workload cluster to be managed from the management cluster)

        eksctl anywhere create cluster \
           -f eksa-w01-cluster.yaml \
           --kubeconfig mgmt/mgmt-eks-a-cluster.kubeconfig \
           # --install-packages packages.yaml \ # uncomment to install curated packages at cluster creation

  As noted earlier, adding the --kubeconfig option tells eksctl to use the management cluster identified by that kubeconfig file to create a different workload cluster.

- Check the workload cluster:

  You can now use the workload cluster as you would any Kubernetes cluster. Change your kubeconfig to point to the new workload cluster (for example, w01), then run the test application with:

      export CLUSTER_NAME=w01
      export KUBECONFIG=${PWD}/${CLUSTER_NAME}/${CLUSTER_NAME}-eks-a-cluster.kubeconfig
      kubectl apply -f "https://anywhere.eks.amazonaws.com/manifests/hello-eks-a.yaml"

  Verify the test application in the deploy test application section.

- Add more workload clusters:

  To add more workload clusters, go through the same steps for creating the initial workload cluster, copying the config file to a new name (such as eksa-w02-cluster.yaml), modifying resource names, and running the create cluster command again.

Next steps:

- See the Cluster management section for more information on common operational tasks like scaling and deleting the cluster.

- See the Package management section for more information on post-creation curated packages installation.

5 - Configure for Nutanix

This is a generic template with detailed descriptions below for reference.

The following additional optional configuration can also be included:

- CNI

- IAM Roles for Service Accounts

- IAM Authenticator

- OIDC

- Registry Mirror

- Proxy

- GitOps

- Machine Health Checks

    apiVersion: anywhere.eks.amazonaws.com/v1alpha1
    kind: Cluster
    metadata:
      name: mgmt
      namespace: default
    spec:
      clusterNetwork:
        cniConfig:
          cilium: {}
        pods:
          cidrBlocks:
            - 192.168.0.0/16
        services:
          cidrBlocks:
            - 10.96.0.0/16
      controlPlaneConfiguration:
        count: 3
        endpoint:
          host: ""
        machineGroupRef:
          kind: NutanixMachineConfig
          name: mgmt-cp-machine
      datacenterRef:
        kind: NutanixDatacenterConfig
        name: nutanix-cluster
      externalEtcdConfiguration:
        count: 3
        machineGroupRef:
          kind: NutanixMachineConfig
          name: mgmt-etcd
      kubernetesVersion: "1.28"
      workerNodeGroupConfigurations:
        - count: 1
          machineGroupRef:
            kind: NutanixMachineConfig
            name: mgmt-machine
          name: md-0
    ---
    apiVersion: anywhere.eks.amazonaws.com/v1alpha1
    kind: NutanixDatacenterConfig
    metadata:
      name: nutanix-cluster
      namespace: default
    spec:
      endpoint: pc01.cloud.internal
      port: 9440
      credentialRef:
        kind: Secret
        name: nutanix-credentials
    ---
    apiVersion: anywhere.eks.amazonaws.com/v1alpha1
    kind: NutanixMachineConfig
    metadata:
      annotations:
        anywhere.eks.amazonaws.com/control-plane: "true"
      name: mgmt-cp-machine
      namespace: default
    spec:
      cluster:
        name: nx-cluster-01
        type: name
      image:
        name: eksa-ubuntu-2004-kube-v1.28
        type: name
      memorySize: 4Gi
      osFamily: ubuntu
      subnet:
        name: vm-network
        type: name
      systemDiskSize: 40Gi
      project:
        type: name
        name: my-project
      users:
        - name: eksa
          sshAuthorizedKeys:
            - ssh-rsa AAAA…
      vcpuSockets: 2
      vcpusPerSocket: 1
      additionalCategories:
        - key: my-category
          value: my-category-value
    ---
    apiVersion: anywhere.eks.amazonaws.com/v1alpha1
    kind: NutanixMachineConfig
    metadata:
      name: mgmt-etcd
      namespace: default
    spec:
      cluster:
        name: nx-cluster-01
        type: name
      image:
        name: eksa-ubuntu-2004-kube-v1.28
        type: name
      memorySize: 4Gi
      osFamily: ubuntu
      subnet:
        name: vm-network
        type: name
      systemDiskSize: 40Gi
      project:
        type: name
        name: my-project
      users:
        - name: eksa
          sshAuthorizedKeys:
            - ssh-rsa AAAA…
      vcpuSockets: 2
      vcpusPerSocket: 1
    ---
    apiVersion: anywhere.eks.amazonaws.com/v1alpha1
    kind: NutanixMachineConfig
    metadata:
      name: mgmt-machine
      namespace: default
    spec:
      cluster:
        name: nx-cluster-01
        type: name
      image:
        name: eksa-ubuntu-2004-kube-v1.28
        type: name
      memorySize: 4Gi
      osFamily: ubuntu
      subnet:
        name: vm-network
        type: name
      systemDiskSize: 40Gi
      project:
        type: name
        name: my-project
      users:
        - name: eksa
          sshAuthorizedKeys:
            - ssh-rsa AAAA…
      vcpuSockets: 2
      vcpusPerSocket: 1
    ---

Cluster Fields

name (required)

Name of your cluster (mgmt in this example).

clusterNetwork (required)

Network configuration.

clusterNetwork.cniConfig (required)

CNI plugin configuration. Supports cilium.

clusterNetwork.cniConfig.cilium.policyEnforcementMode (optional)

Optionally specify a policyEnforcementMode of default, always or never.

clusterNetwork.cniConfig.cilium.egressMasqueradeInterfaces (optional)

Optionally specify a network interface name or interface prefix used for masquerading. See EgressMasqueradeInterfaces option.

clusterNetwork.cniConfig.cilium.skipUpgrade (optional)

When true, skip Cilium maintenance during upgrades. Also see Use a custom CNI.

clusterNetwork.cniConfig.cilium.routingMode (optional)

Optionally specify the routing mode. Accepts default and direct. Also see the RoutingMode option.

clusterNetwork.cniConfig.cilium.ipv4NativeRoutingCIDR (optional)

Optionally specify the CIDR to use when RoutingMode is set to direct. When specified, Cilium assumes networking for this CIDR is preconfigured and hands traffic destined for that range to the Linux network stack without applying any SNAT.

clusterNetwork.cniConfig.cilium.ipv6NativeRoutingCIDR (optional)

Optionally specify the IPv6 CIDR to use when RoutingMode is set to direct. When specified, Cilium assumes networking for this CIDR is preconfigured and hands traffic destined for that range to the Linux network stack without applying any SNAT.

clusterNetwork.pods.cidrBlocks[0] (required)

The pod subnet specified in CIDR notation. Only 1 pod CIDR block is permitted. The CIDR block should not conflict with the host or service network ranges.

clusterNetwork.services.cidrBlocks[0] (required)

The service subnet specified in CIDR notation. Only 1 service CIDR block is permitted. This CIDR block should not conflict with the host or pod network ranges.
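Since the pod and service CIDR blocks must not conflict with each other or with the host network, a quick overlap check can catch mistakes before cluster creation. The following is a minimal POSIX shell sketch for IPv4 blocks; the function names are hypothetical, and this is an illustration rather than the validation EKS Anywhere itself performs.

```shell
# Convert a dotted-quad IPv4 address to an integer.
ip_to_int() {
  old_ifs=$IFS
  IFS=.
  set -- $1            # split the address into its four octets
  IFS=$old_ifs
  echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
}

# Print the first and last address (as integers) of an IPv4 CIDR block.
cidr_bounds() {
  base=$(ip_to_int "${1%/*}")
  prefix=${1#*/}
  size=$(( 1 << (32 - prefix) ))
  first=$(( base / size * size ))     # align down to the network address
  echo "$first $(( first + size - 1 ))"
}

# Succeeds (exit status 0) when the two CIDR blocks share at least one address.
cidrs_overlap() {
  set -- $(cidr_bounds "$1") $(cidr_bounds "$2")
  [ "$1" -le "$4" ] && [ "$3" -le "$2" ]
}

# The pod and service ranges from the template above do not overlap.
if cidrs_overlap 192.168.0.0/16 10.96.0.0/16; then
  echo "pod and service CIDR blocks overlap"
else
  echo "pod and service CIDR blocks are disjoint"
fi
```

The same function can be used to compare either block against the host network range of your VM subnet.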

clusterNetwork.dns.resolvConf.path (optional)

File path to a file containing a custom DNS resolver configuration.

controlPlaneConfiguration (required)

Specific control plane configuration for your Kubernetes cluster.

controlPlaneConfiguration.count (required)

Number of control plane nodes

controlPlaneConfiguration.machineGroupRef (required)

Refers to the Kubernetes object with Nutanix specific configuration for your nodes. See NutanixMachineConfig fields below.

controlPlaneConfiguration.endpoint.host (required)

A unique IP you want to use for the control plane VM in your EKS Anywhere cluster. Choose an IP in your network range that does not conflict with other VMs.

NOTE: This IP should be outside the network DHCP range as it is a floating IP that gets assigned to one of the control plane nodes for kube-apiserver load balancing. Suggestions on how to ensure this IP does not cause issues during the cluster creation process are here.

workerNodeGroupConfigurations (required)

This takes in a list of node groups that you can define for your workers. You may define one or more worker node groups.

workerNodeGroupConfigurations[*].count (optional)

Number of worker nodes (default: 1). It will be ignored if the cluster autoscaler curated package is installed and autoscalingConfiguration is used to specify the desired range of replicas.

Refer to troubleshooting machine health check remediation not allowed and choose a sufficient number to allow machine health check remediation.

workerNodeGroupConfigurations[*].machineGroupRef (required)

Refers to the Kubernetes object with Nutanix specific configuration for your nodes. See NutanixMachineConfig fields below.

workerNodeGroupConfigurations[*].name (required)

Name of the worker node group (default: md-0)

workerNodeGroupConfigurations[*].autoscalingConfiguration.minCount (optional)

Minimum number of nodes for this node group’s autoscaling configuration.

workerNodeGroupConfigurations[*].autoscalingConfiguration.maxCount (optional)

Maximum number of nodes for this node group’s autoscaling configuration.

workerNodeGroupConfigurations[*].kubernetesVersion (optional)

The Kubernetes version you want to use for this worker node group. Supported values: 1.28, 1.27, 1.26, 1.25, 1.24

externalEtcdConfiguration.count (optional)

Number of etcd members

externalEtcdConfiguration.machineGroupRef (optional)

Refers to the Kubernetes object with Nutanix specific configuration for your etcd members. See NutanixMachineConfig fields below.

datacenterRef (required)

Refers to the Kubernetes object with Nutanix environment specific configuration. See NutanixDatacenterConfig fields below.

kubernetesVersion (required)

The Kubernetes version you want to use for your cluster. Supported values: 1.28, 1.27, 1.26, 1.25, 1.24

NutanixDatacenterConfig Fields

endpoint (required)

The Prism Central server fully qualified domain name or IP address. If the server IP is used, the PC SSL certificate must have an IP SAN configured.

port (required)

The Prism Central server port. (Default: 9440)

credentialRef (required)

Reference to the Kubernetes secret that contains the Prism Central credentials.

insecure (optional)

Set insecure to true if the Prism Central server does not have a valid certificate. This is not recommended for production use cases. (Default: false)

additionalTrustBundle (optional; required if using a self-signed PC SSL certificate)

The PEM encoded CA trust bundle.

The additionalTrustBundle needs to be populated with the PEM-encoded x509 certificate of the Root CA that issued the certificate for Prism Central. Suggestions on how to obtain this certificate are here.

Example:

    additionalTrustBundle: |
      -----BEGIN CERTIFICATE-----
      <certificate string>
      -----END CERTIFICATE-----
      -----BEGIN CERTIFICATE-----
      <certificate string>
      -----END CERTIFICATE-----

NutanixMachineConfig Fields

cluster (required)

Reference to the Prism Element cluster.

cluster.type (required)

Type to identify the Prism Element cluster. (Permitted values: name or uuid)

cluster.name (name or UUID required)

Name of the Prism Element cluster.

cluster.uuid (name or UUID required)

UUID of the Prism Element cluster.

image (required)

Reference to the OS image used for the system disk.

image.type (required)

Type to identify the OS image. (Permitted values: name or uuid)

image.name (name or UUID required)

Name of the image

The image.name must contain the Cluster.Spec.KubernetesVersion or Cluster.Spec.WorkerNodeGroupConfiguration[].KubernetesVersion version (in case of modular upgrade). For example, if the Kubernetes version is 1.24, image.name must include 1.24, 1_24, 1-24 or 124.

image.uuid (name or UUID required)

UUID of the image

The name of the image associated with the uuid must contain the Cluster.Spec.KubernetesVersion or Cluster.Spec.WorkerNodeGroupConfiguration[].KubernetesVersion version (in case of modular upgrade). For example, if the Kubernetes version is 1.24, the name associated with image.uuid field must include 1.24, 1_24, 1-24 or 124.
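The naming rule above can be checked before cluster creation with a small helper. This is a minimal POSIX shell sketch based only on the four spellings listed in this section (for example 1.24, 1_24, 1-24 or 124); the function name is hypothetical, and the actual validation in EKS Anywhere may differ in detail.

```shell
# Succeeds (exit status 0) when IMAGE_NAME contains the Kubernetes version
# in one of the accepted spellings: dotted, underscore, hyphen, or no separator.
image_name_matches_version() {
  image_name=$1
  major=${2%%.*}    # "1" from "1.28"
  minor=${2#*.}     # "28" from "1.28"
  for candidate in "$major.$minor" "${major}_$minor" "$major-$minor" "$major$minor"; do
    case $image_name in
      *"$candidate"*) return 0 ;;
    esac
  done
  return 1
}

# Image name and version taken from the template earlier in this section.
if image_name_matches_version "eksa-ubuntu-2004-kube-v1.28" "1.28"; then
  echo "image name matches Kubernetes 1.28"
else
  echo "image name does not contain the Kubernetes version"
fi
```

Because this is a plain substring match, it can report a match for unrelated digits in the image name, so treat it as a quick sanity check only.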

memorySize (optional)

Size of RAM on virtual machines (Default: 4Gi)

osFamily (optional)

Operating System on virtual machines. Permitted values: ubuntu and redhat. (Default: ubuntu)

subnet (required)

Reference to the subnet to be assigned to the VMs.

subnet.name (name or UUID required)

Name of the subnet.

subnet.type (required)

Type to identify the subnet. (Permitted values: name or uuid)

subnet.uuid (name or UUID required)

UUID of the subnet.

systemDiskSize (optional)

Amount of storage assigned to the system disk. (Default: 40Gi)

vcpuSockets (optional)

Number of vCPU sockets. (Default: 2)

vcpusPerSocket (optional)

Number of vCPUs per socket. (Default: 1)

project (optional)

Reference to an existing project used for the virtual machines.

project.type (required)

Type to identify the project. (Permitted values: name or uuid)

project.name (name or UUID required)

Name of the project

project.uuid (name or UUID required)

UUID of the project

additionalCategories (optional)

Reference to a list of existing Nutanix Categories to be assigned to virtual machines.

additionalCategories[0].key

Nutanix Category to add to the virtual machine.

additionalCategories[0].value

Value of the Nutanix Category to add to the virtual machine

users (optional)

The users you want to configure to access your virtual machines. Only one is permitted at this time.

users[0].name (optional)

The name of the user you want to configure to access your virtual machines through ssh.

The default is eksa if osFamily=ubuntu

users[0].sshAuthorizedKeys (optional)

The SSH public keys you want to configure to access your virtual machines through ssh (as described below). Only 1 is supported at this time.

users[0].sshAuthorizedKeys[0] (optional)

This is the SSH public key that will be placed in authorized_keys on all EKS Anywhere cluster VMs so you can ssh into them. The user will be what is defined under name above. For example:

ssh -i <private-key-file> <user>@<VM-IP>

If you do not specify a value, a key is generated in your $(pwd)/<cluster-name> folder.
